Introduction

I joined Mozilla right before the A-Frame v0.1.0 release.

I contributed to the design and implementation of the core, the A-Frame Inspector, demos (A-Painter, A-Blast, A-Saturday-night, ...), as well as several components.

On this page I would like to share some of the components I worked on.

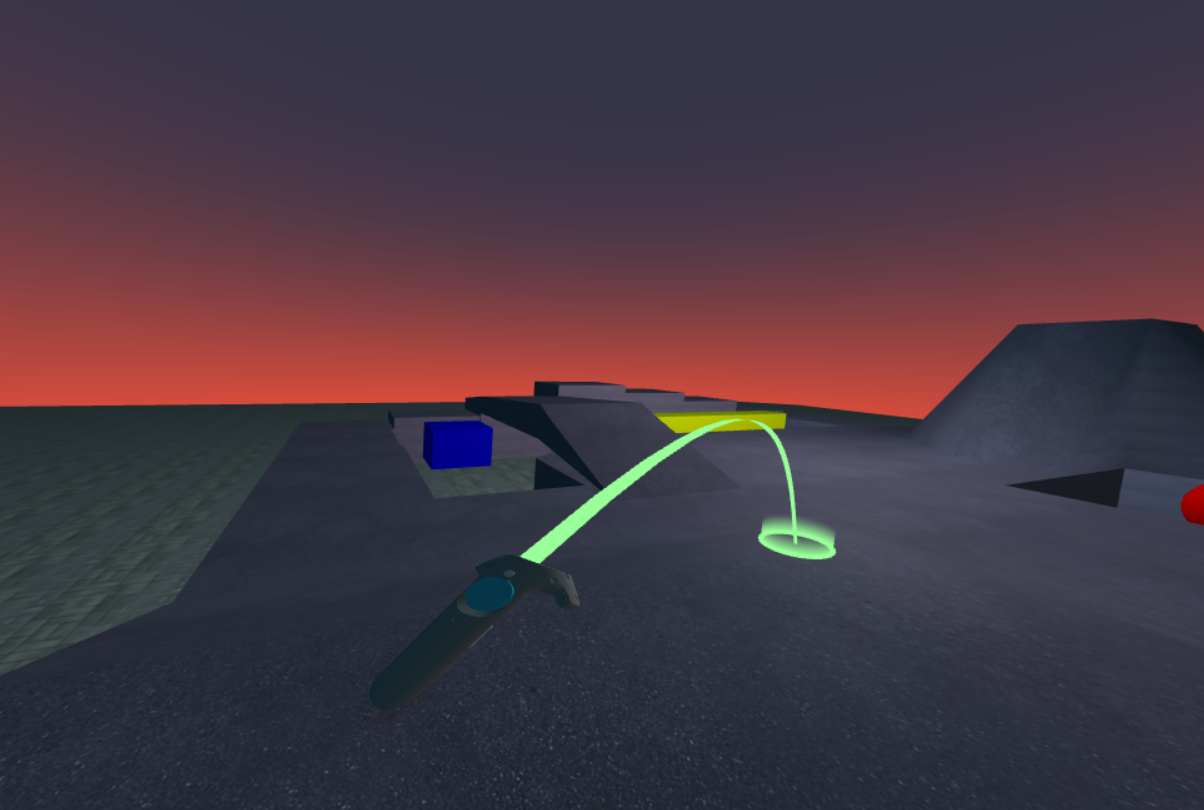

Teleport component

This is probably one of the most useful components I created. I used it in almost every example or demo I worked on, e.g. A-Painter. It is heavily inspired by the teleport in The Lab by Valve.

It works with both 6dof and 3dof controllers.

For more information and implementation details please make sure you check this article.

Camera transform controls

I created this component inspired by VR editor prototypes such as those for Unity and Unreal.

The component provides three ways to interact with the scene:

- Translate: Pressing a button (grip by default) on one controller while moving it will move the entire scene.
- Scale: Pressing the same button (grip by default) on both controllers and changing the distance between them will scale the scene.
- Rotate: Also pressing the button on both controllers at the same time, but instead of moving them closer together or further apart, you turn them around so the scene is rotated.
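The math behind these three interactions can be sketched in a few lines. This is only an illustration and not the component's actual code: the helper names `oneHandDelta` and `twoHandDelta` are hypothetical, positions are assumed to be plain `{x, y, z}` objects in world space, and rotation is assumed to happen only around the vertical (Y) axis.

```javascript
// Hypothetical helpers, not taken from the component's source.

// Translate: with one grip pressed, the scene follows the hand's movement.
function oneHandDelta(prev, curr) {
  return { x: curr.x - prev.x, y: curr.y - prev.y, z: curr.z - prev.z };
}

// Scale and rotate: with both grips pressed, compare the hand-to-hand
// vector before and after the movement.
function twoHandDelta(prevLeft, prevRight, left, right) {
  const prev = oneHandDelta(prevLeft, prevRight);
  const curr = oneHandDelta(left, right);

  // Scale factor: ratio between the new and the old hand distance.
  const prevDist = Math.hypot(prev.x, prev.y, prev.z);
  const currDist = Math.hypot(curr.x, curr.y, curr.z);
  const scale = prevDist > 0 ? currDist / prevDist : 1;

  // Yaw: change in heading of the hand-to-hand vector on the XZ plane,
  // with heading measured as atan2(x, z) (an assumed convention).
  let yaw = Math.atan2(curr.x, curr.z) - Math.atan2(prev.x, prev.z);
  if (yaw > Math.PI) yaw -= 2 * Math.PI;   // wrap into [-PI, PI]
  if (yaw < -Math.PI) yaw += 2 * Math.PI;

  return { scale, yaw };
}
```

The returned values would then be applied every frame to whatever entity wraps the scene (or inversely to the camera rig), which is a design choice this sketch leaves open.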

While developing this component I started building a prototype in raw three.js too:

I also created the same interaction in Unity just to learn a bit about the XR API:

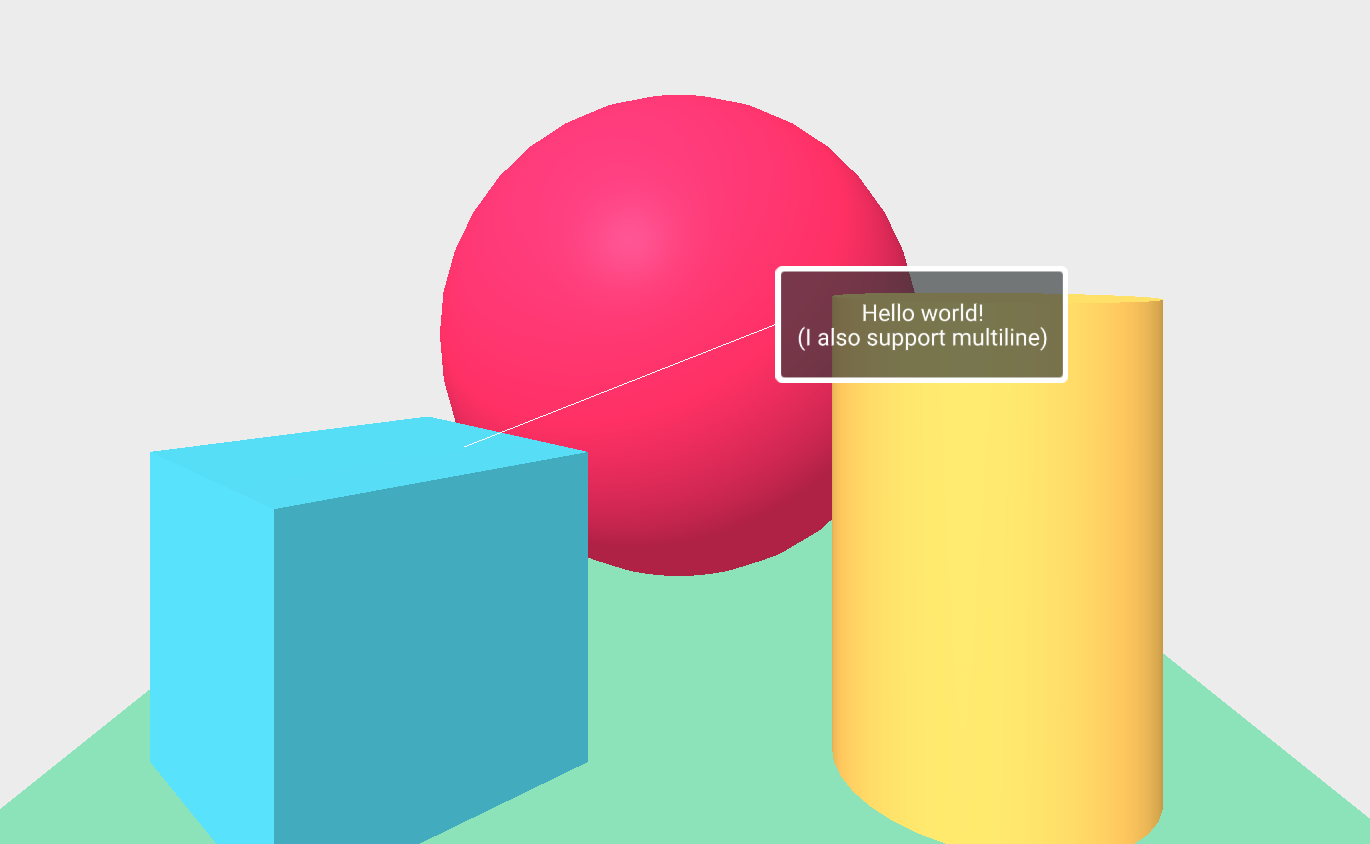

Tooltip

I created the tooltips component when working on the A-Painter application, as we were using multiple buttons and needed a way to tell users what each button does.

The configuration is really simple: you just define the text, width and size of the tooltip and the offset position. The component will create the line and the box, and it will always face the camera.

Slice9

Another useful component when creating UI. Previously we were basically using the same size for every button, as otherwise the button texture would get distorted. With this component you can define a texture and the offset of each corner, and it will keep the proportions no matter what size the button has.
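To illustrate the idea, here is a minimal sketch of how the grid lines of a nine-slice quad could be computed along one axis. The function `sliceAxis` and its parameters are hypothetical and do not reflect the component's real properties: the borders keep a fixed size in world units while only the middle section stretches.

```javascript
// Hypothetical helper, not the component's actual API.
// size:        final size of the button along this axis (world units)
// borderStart: thickness of the first border (world units)
// borderEnd:   thickness of the last border (world units)
// uvStart:     fraction of the texture (0..1) covered by the first border
// uvEnd:       fraction of the texture (0..1) covered by the last border
function sliceAxis(size, borderStart, borderEnd, uvStart, uvEnd) {
  // Shrink the borders proportionally if the button is too small for them.
  const total = borderStart + borderEnd;
  const k = total > size ? size / total : 1;
  const b0 = borderStart * k;
  const b1 = borderEnd * k;

  return {
    // Four vertex positions along the axis, centered on the origin.
    positions: [-size / 2, -size / 2 + b0, size / 2 - b1, size / 2],
    // Matching texture coordinates: the borders always sample the same texels.
    uvs: [0, uvStart, 1 - uvEnd, 1]
  };
}
```

Calling it once for the horizontal axis and once for the vertical axis gives the 4x4 grid of vertices that make up the nine patches.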

4DOF

A 4dof controls component for A-Frame, based on the original idea in the Thinking Beyond a Rotation-Only Controller talk. It is based on the assumption that you usually don't rotate your wrist around the Z axis, so it maps that rotation to the position's Z coordinate.
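A rough sketch of that mapping, under assumptions of my own: the function name `rollToDepth`, the `metersPerRadian` gain, the `maxReach` clamp and the sign convention are all hypothetical tuning choices, not values from the component.

```javascript
// Hypothetical mapping from wrist roll to depth, not the component's code.
// rollRadians:     controller rotation around its own Z axis
// metersPerRadian: assumed gain (how far the hand moves per radian of roll)
// maxReach:        assumed limit so the virtual hand stays within arm's reach
function rollToDepth(rollRadians, metersPerRadian = 0.3, maxReach = 0.6) {
  const z = rollRadians * metersPerRadian;
  // Clamp the offset into [-maxReach, maxReach].
  return Math.max(-maxReach, Math.min(maxReach, z));
}
```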

In the following video you can see experimental 4dof support added to A-Painter:

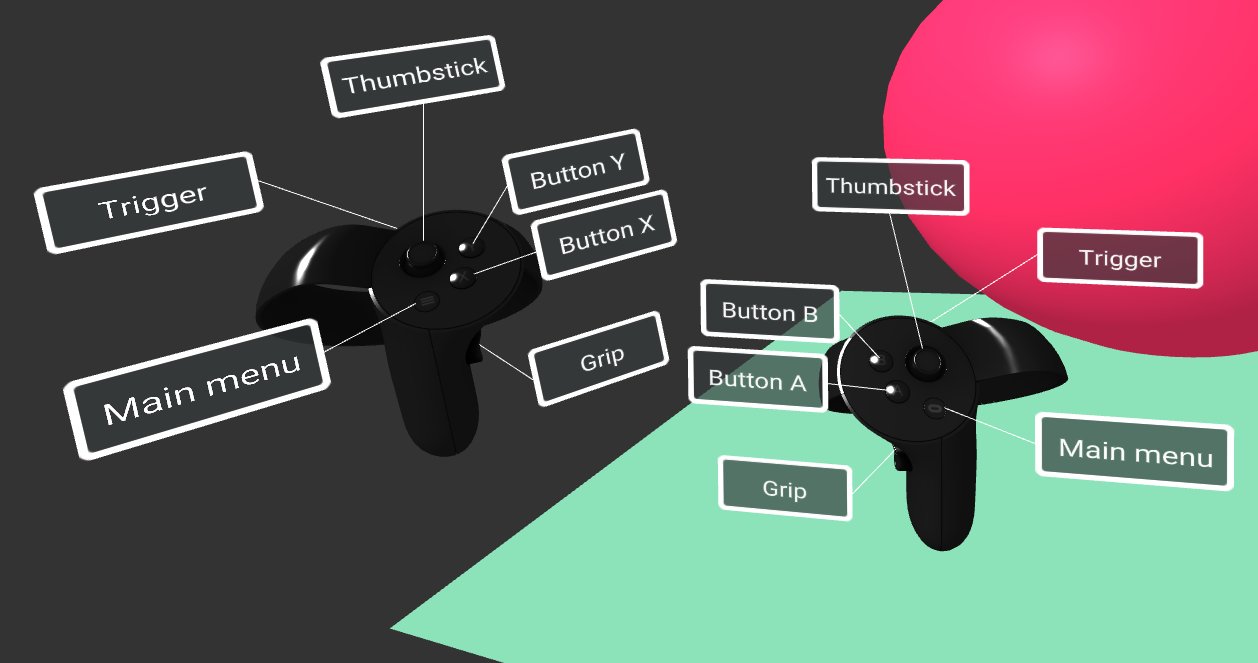

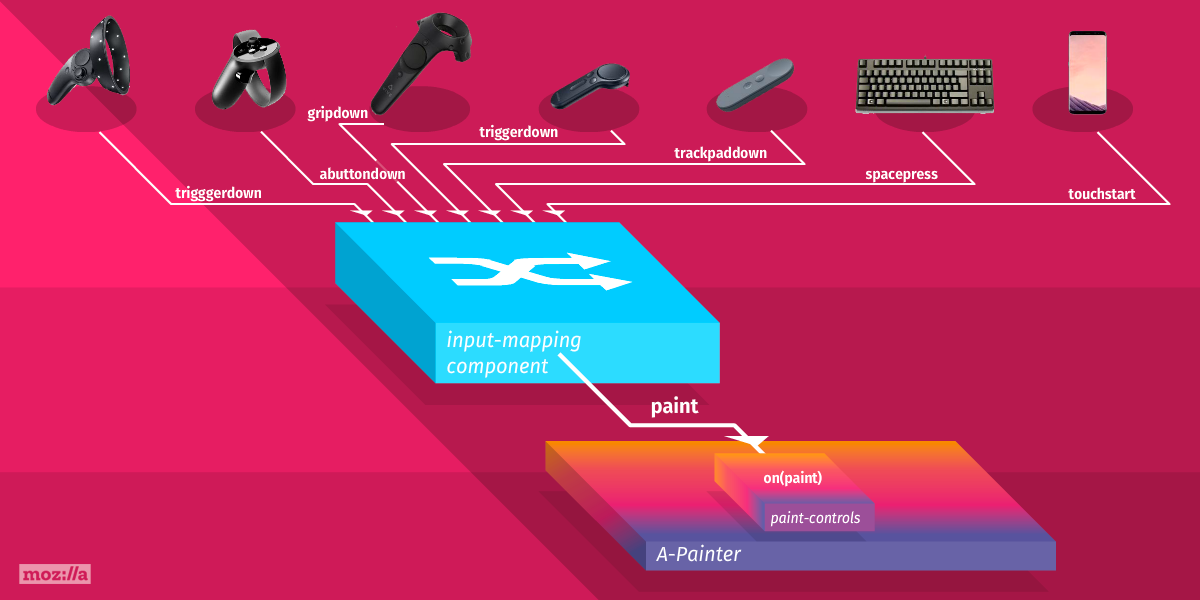

Input mapping

When we were working on WebXR applications like A-Painter or A-Blast, which were designed to work across different hardware, we realized the importance of having a convenient way to map inputs to application-specific actions. It used to be that Vive controllers were the only hand input available, so our logic was hard-wired to them. As Oculus, Google, and Microsoft started releasing their platforms, we needed a scalable and convenient solution to support multiple input methods. Inspired by the Steam Controller API (this talk is a great introduction), I developed a more convenient way to map inputs to application-specific actions.

Currently, A-Frame supports most of the major VR devices out there: Vive, Oculus Rift and Quest, Microsoft WMR, and many more. You don't have to deal with controller IDs or identify which indices correspond to which button. However, we still need to manually map particular actions to each of the controller inputs.

This component aimed to solve these issues by mapping input to actions, so in your application you will be checking for playerJump, playerKick instead of buttonA or touchPadPressed.
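As an illustration of the idea, the lookup could be as simple as the table below. The table layout and the `resolveAction` helper are hypothetical and are not the component's real configuration format. The controller and event names are only examples.

```javascript
// Hypothetical mapping table: controller type -> raw input event -> action.
const mappings = {
  'vive-controls': {
    trackpaddown: 'playerJump',
    triggerdown: 'playerKick'
  },
  'oculus-touch-controls': {
    abuttondown: 'playerJump',
    triggerdown: 'playerKick'
  }
};

// Translate a raw controller event into an application-specific action.
// Returns null when the input is not mapped for that controller.
function resolveAction(table, controllerType, rawEvent) {
  const forController = table[controllerType];
  return (forController && forController[rawEvent]) || null;
}
```

The application code then only listens for `playerJump` or `playerKick`, and supporting a new controller means adding one more entry to the table.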

Apart from the basic touched, down, up, pressed, it supports a wide range of other events such as longpress or doublepress.
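Events like these can be derived from plain down/up events and their timestamps. The sketch below is a simplified illustration, not the component's implementation: the `PressClassifier` class and its thresholds are hypothetical, and it classifies a press only on release, whereas a real long press would probably fire while the button is still held.

```javascript
// Hypothetical classifier, thresholds in milliseconds are assumptions.
class PressClassifier {
  constructor(longPressMs = 500, doublePressMs = 300) {
    this.longPressMs = longPressMs;
    this.doublePressMs = doublePressMs;
    this.downAt = null;   // timestamp of the pending button down
    this.lastUpAt = null; // timestamp of the previous short press release
  }

  down(time) {
    this.downAt = time;
  }

  // Returns 'longpress', 'doublepress', 'press', or null if no down preceded it.
  up(time) {
    if (this.downAt === null) return null;
    const held = time - this.downAt;
    this.downAt = null;

    if (held >= this.longPressMs) {
      this.lastUpAt = null;
      return 'longpress';
    }
    if (this.lastUpAt !== null && time - this.lastUpAt <= this.doublePressMs) {
      this.lastUpAt = null;
      return 'doublepress';
    }
    this.lastUpAt = time;
    return 'press';
  }
}
```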

For more info please read this article.

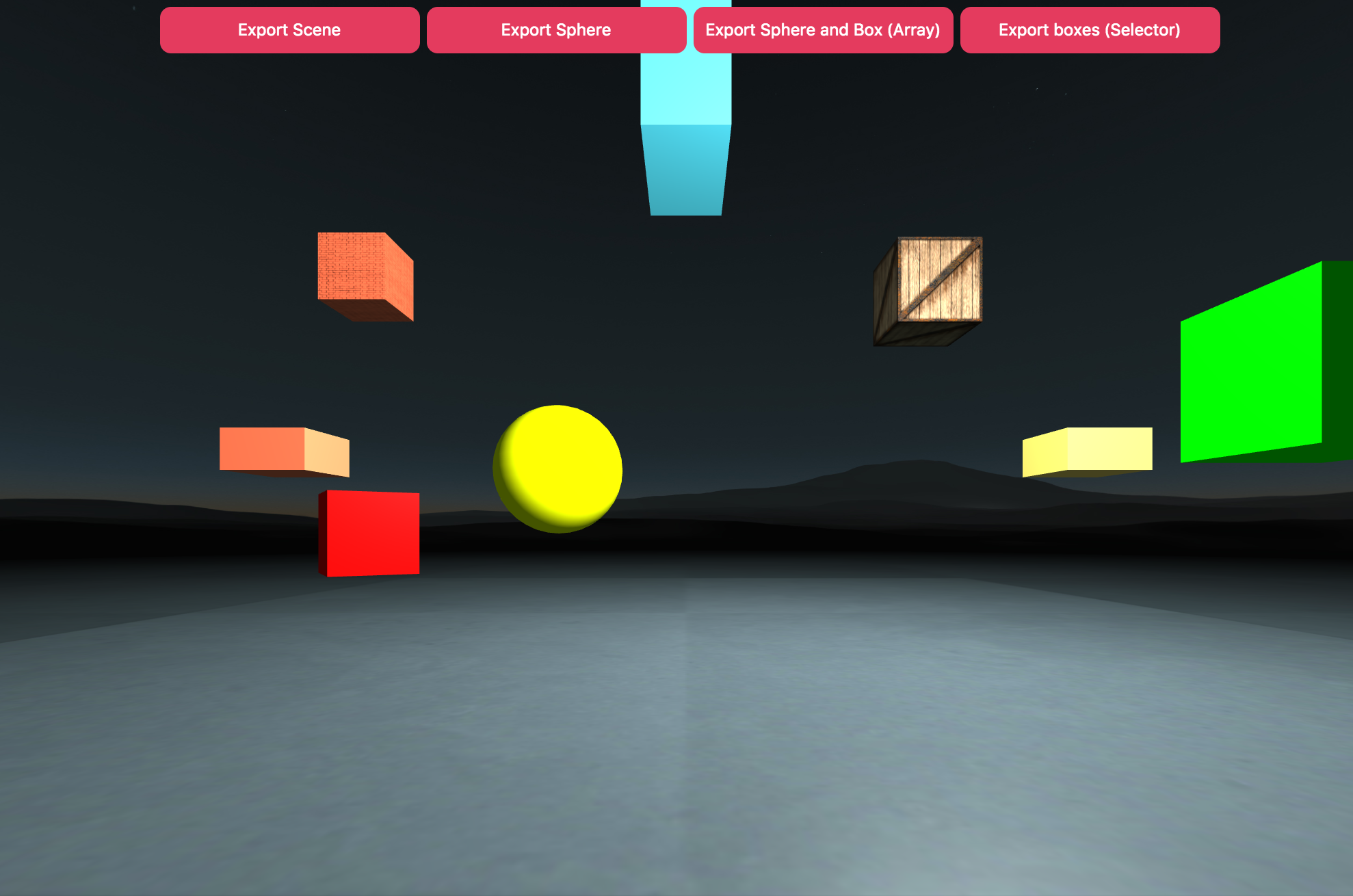

glTF Exporter component

When I was working on adding glTF export support to three.js, I also created a component for A-Frame to make the process of exporting your scene or specific entities to glTF as simple as possible.

You just need to add the gltf-exporter component to your scene and then call export on the node you want to export:

sceneEl.systems['gltf-exporter'].export(input, options);

Uploadcare

This one is not that fancy or visual, but it was super useful for us, as most of our applications stored something in the cloud: drawings in A-Painter, your dancing moves in A-Saturday-night, or uploaded assets in the A-Frame Inspector.